- Understanding deterministic vs. probabilistic systems is critical for AI success.

- Generative AI introduces both opportunity and risk in customer-facing use cases.

- Most current approaches overcorrect with guardrails that limit value.

- Organizations that blend both systems gain an advantage in CX, efficiency, and risk management.

By Scott Litman, SVP at Capacity & Steve Frederickson, Senior Director of Product at Capacity

Something quietly dangerous is happening in boardrooms and product meetings across the country. Business leaders are commissioning AI-powered customer service and support systems—chatbots, virtual agents, automated workflows—with every expectation that these systems will behave like the software they’ve always known: consistent, predictable, reliable.

They won’t. Not without the right architecture.

The reason comes down to a distinction that most business leaders have never been asked to think about: the difference between deterministic and probabilistic systems. Understanding it won’t just make you a smarter buyer of AI technology; it could save your company from significant legal, financial, and reputational exposure.

Two Types of Software. One Major Misunderstanding.

Across 90 years of computing history, virtually every piece of software that businesses relied on operated on a simple and elegant principle: the same input always produces the same output. Type in a query, get back a result. Every time, without exception. Computers don’t sleep. They don’t guess. They don’t improvise. Computer science calls this a deterministic system, and it’s the reason we’ve trusted software to run banks, fly planes, and manage payroll.

Then, starting around 2022 with the general availability of the GPT-3 API, something fundamentally different entered the picture. Businesses could build software that runs on probability.

Generative AI (the engine behind ChatGPT, Claude, Gemini, and similar tools) is inherently probabilistic. Ask the same question twice, and you may get two different answers. That’s not a bug. It’s by design. The system produces outputs based on statistical probability, not fixed logic. The confidence with which it answers has no relationship to whether the answer is actually correct.

A probabilistic system can never guarantee 100% accuracy. It might produce the right result most of the time—and therein lies both its power and its peril.
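To make the distinction concrete, here is a minimal Python sketch. It is a toy, not a description of how any production model works: `lookup_apy` stands in for deterministic logic, and `sample_answer` imitates probabilistic generation by drawing from a small, made-up distribution of candidate answers. Every name, rate, and weight below is an assumption chosen for illustration.

```python
import random

# Hypothetical verified rate table; the value is illustrative only.
VERIFIED_RATES = {"12-month CD": "4.00%"}

def lookup_apy(product: str) -> str:
    """Deterministic: the same input always returns the same output."""
    return VERIFIED_RATES[product]

# Made-up candidate answers and weights, standing in for a model's
# probability distribution over possible outputs.
CANDIDATES = ["4.00%", "4.01%", "3.95%"]
WEIGHTS = [0.90, 0.07, 0.03]

def sample_answer(rng: random.Random) -> str:
    """Probabilistic: each call draws one answer according to its weight."""
    return rng.choices(CANDIDATES, weights=WEIGHTS, k=1)[0]

def distinct_answers(n: int, seed: int = 0) -> set:
    """Ask the same question n times and collect the distinct answers."""
    rng = random.Random(seed)
    return {sample_answer(rng) for _ in range(n)}

if __name__ == "__main__":
    # The lookup yields exactly one distinct value no matter how often it runs.
    print({lookup_apy("12-month CD") for _ in range(1000)})
    # The sampler is right most of the time, but not every time.
    print(distinct_answers(1000))
```

In this sketch the sampler returns the correct rate about 90% of the time, and nothing in any single answer tells you whether it is one of the correct ones.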

The implications are profound, and they are playing out right now in companies that are deploying AI without fully grasping this distinction.

Real-world example: Bank Customer Service

Let’s make this concrete with a scenario that is already playing out in the real world.

Imagine you’re a customer calling your bank’s generative AI-powered support line. The experience starts beautifully. The system is conversational, warm, and surprisingly good at navigating your questions. You ask about different account options (CDs, savings accounts, credit cards) and it handles every curveball you throw at it. You interrupt it. You go off-script. You ask follow-up questions. It keeps up. This, frankly, is far better than pressing 1 for English and waiting for the next tree of menu items to be read off.

Then you ask: “What’s the APY on your current CD offering, and what would my return be if I deposited $10,000 for 12 months?”

The system answers confidently. It gives you a figure, let’s say 4.01% APY, and calculates your return correctly based on that number. It’s convincing and helpful, and it shows no hesitation or indication that anything is amiss.

The actual APY is 4.00%.

That hundredth of a percent might sound trivial. As the customer, you came out ahead. To the bank, it represents a real problem. Multiply it across thousands of customers, each of whom just had their return calculated on a rate the bank never offered, and you’ve created a liability that could run into the millions. If the implementation was particularly careless, it could put the bank out of business.
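The arithmetic behind this scenario can be sketched in a few lines of Python. This is an illustration, not a bank's actual formula: it assumes interest over the term is `principal * ((1 + APY)^(months/12) - 1)`, and the function names and the sample deposit list are hypothetical.

```python
from decimal import Decimal, ROUND_HALF_UP

CENT = Decimal("0.01")

def interest_earned(principal: Decimal, apy_percent: Decimal, months: int) -> Decimal:
    """Interest on a deposit at a given APY over a term, rounded to the cent."""
    growth = (1 + apy_percent / 100) ** (Decimal(months) / 12)
    return (principal * (growth - 1)).quantize(CENT, rounding=ROUND_HALF_UP)

def quote_gap(principal: Decimal, quoted: Decimal, actual: Decimal, months: int) -> Decimal:
    """Difference between what was quoted and what the bank actually offers."""
    return interest_earned(principal, quoted, months) - interest_earned(principal, actual, months)

def total_exposure(deposits, quoted: Decimal, actual: Decimal, months: int) -> Decimal:
    """Sum the per-deposit gaps across every customer who received the wrong quote."""
    return sum((quote_gap(d, quoted, actual, months) for d in deposits), Decimal("0"))

if __name__ == "__main__":
    deposit = Decimal("10000")
    print(interest_earned(deposit, Decimal("4.00"), 12))  # 400.00
    print(interest_earned(deposit, Decimal("4.01"), 12))  # 401.00
    print(quote_gap(deposit, Decimal("4.01"), Decimal("4.00"), 12))  # 1.00
```

The gap on one $10,000 deposit is a single dollar. Total exposure depends on how many customers received the wrong quote, how large their deposits are, and how far off the quoted rate was.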

Now imagine a savvier customer. They say: “I’ve seen that Wealthfront is offering 4.3%. Can you calculate what my 9-month return would be at that rate?” The AI, helpful by design, does the math and validates the scenario. Again, it calculates the return correctly, but based on the customer’s rate rather than the bank’s actual offering. The customer signs up for an account. The bank now has to deal with a commitment it did not knowingly make.

The system didn’t lie. It did exactly what it was designed to do. It produced a compelling, well-reasoned, confident answer that happened to be wrong.

This is the AI Confidence Trap. And it catches organizations that deploy probabilistic systems in contexts that demand deterministic precision.

The Industry’s Scramble—and Why the Current Fixes Are Failing

The business world has recognized this problem, even if it hasn’t always named it correctly. The typical response has been to pile on guardrails: disclaimers, content restrictions, topic blocks, and endless layers of rules designed to keep the AI from saying anything it shouldn’t.

The result? Systems that are nearly useless. Either the answers they give are too hedged to be helpful, or the use cases are too narrow to solve real customer needs.

Recently, while one of us was on spring break, an airline sent a notification that storms were going to disrupt travel plans and that alternative arrangements should be made. The first stop was a very friendly AI agent that could do almost nothing useful, which quickly led to mashing 0 and saying “operator.”

On the other hand, calling customer service for a financial institution revealed a phone tree built back in 2020 with a “press 1 for this” and “press 2 for that.” The outcome was the same: more mashing 0 and a call for an operator.

Here’s the irony: both failure modes damage customer trust. An AI that confidently gives wrong answers is dangerous. An AI so restricted it can’t give useful answers is worthless. Companies are swinging between these two extremes because they’re treating a structural design problem as a content moderation problem.

The real fix isn’t more guardrails on a probabilistic system. It’s knowing when to use a probabilistic system and when to hand off to a deterministic one—and having the architecture to do it seamlessly.

The Capacity Approach: Blending Both Systems by Design

This is precisely the problem Capacity was built to solve.

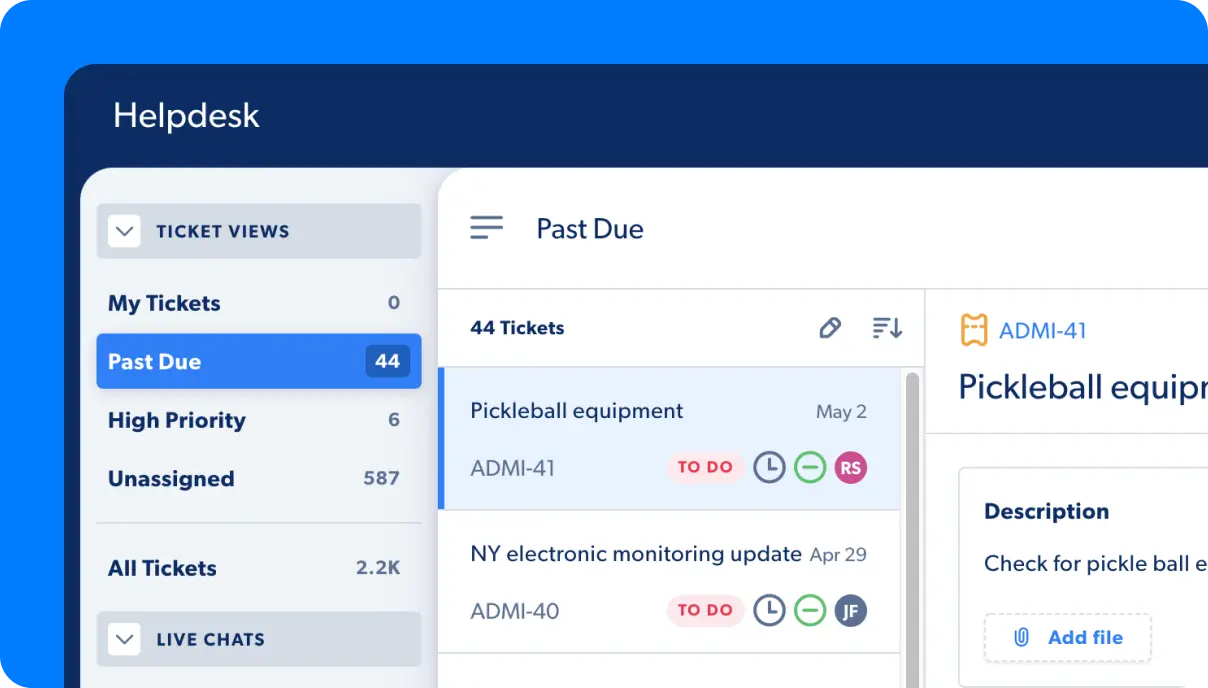

Capacity’s platform enables organizations to build support automation that fluidly transitions between probabilistic and deterministic systems in a single conversation. Rather than forcing one type of system to do the job of both, Capacity lets you design automations that use each for what it does best.

Here’s what that looks like in practice using our bank example:

The customer engages with a warm, conversational AI front end. They can ask open-ended questions, explore product options, change direction mid-conversation, and receive thoughtful, personalized responses. The probabilistic system shines here by navigating ambiguity, understanding intent, and keeping the conversation natural.

But when the conversation reaches an exit point that requires precision—What is the current APY on your CD? What is my projected return?—Capacity’s workflow builder hands off to a deterministic branch that retrieves the exact answer from a verified data source. The answer is 4.00%. Not approximately 4.00%. Exactly 4.00%.

From there, the workflow passes back to the probabilistic system to continue the natural flow of conversation without missing a beat. The conversation carries context from both sides, which lets the system personalize the response: “Based on a 4.00% APY, here’s what your $50,000 would look like after 12 months. Given what you mentioned about your retirement timeline, this might be worth comparing to our 18-month option.”

Think of it as the best of both worlds: the warmth and flexibility of conversational AI, with the precision and accountability of a deterministic system at every moment that matters.
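The handoff described above can be sketched in Python. This is a generic illustration of the pattern, not Capacity's workflow builder or API: the intent labels, the `VERIFIED_APY` table, and every function name are hypothetical, and the generative branch is a stub where a language model call would go.

```python
from dataclasses import dataclass, field
from decimal import Decimal, ROUND_HALF_UP

# Hypothetical verified data source; in practice, a system of record.
VERIFIED_APY = {"12-month CD": Decimal("4.00")}

# Hypothetical intents that must be answered with exact figures.
PRECISE_INTENTS = {"cd_rate", "projected_return"}

@dataclass
class Turn:
    intent: str                      # assumed to come from an upstream classifier
    product: str = "12-month CD"
    deposit: Decimal = Decimal("0")

@dataclass
class Conversation:
    facts: dict = field(default_factory=dict)  # verified values gathered so far
    audit: list = field(default_factory=list)  # which branch answered each turn

def deterministic_branch(turn: Turn) -> dict:
    """Read exact figures from the verified source, never from the customer or the model."""
    apy = VERIFIED_APY[turn.product]
    facts = {"apy": apy}
    if turn.intent == "projected_return":
        facts["return_12mo"] = (turn.deposit * apy / 100).quantize(
            Decimal("0.01"), rounding=ROUND_HALF_UP
        )
    return facts

def generative_branch(turn: Turn, facts: dict) -> str:
    """Stub for a language model call; it may phrase verified facts but not change them."""
    return f"[conversational reply about {turn.intent}, using {len(facts)} verified fact(s)]"

def handle(turn: Turn, convo: Conversation) -> str:
    """Route one turn, record which branch answered, and keep shared context."""
    if turn.intent in PRECISE_INTENTS:
        convo.facts.update(deterministic_branch(turn))
        convo.audit.append((turn.intent, "deterministic"))
        reply = f"The current APY on the {turn.product} is {convo.facts['apy']}%."
        if turn.intent == "projected_return":
            reply += f" Your 12-month return would be ${convo.facts['return_12mo']}."
        return reply
    convo.audit.append((turn.intent, "probabilistic"))
    return generative_branch(turn, convo.facts)
```

A short usage example: `handle(Turn("projected_return", deposit=Decimal("50000")), Conversation())` answers from the verified table, so a rate the customer mentions in passing never enters the calculation. The `audit` list is one simple way to keep a record of which branch produced each answer.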

This capability (what we at Capacity call AI Knowledge Orchestration) is uniquely powerful because Capacity was built from the ground up as a workflow and automation platform, with AI as a native component. That’s the opposite of most AI-native vendors, who start with a foundation model and then try to bolt on deterministic guardrails after the fact. Starting from the deterministic side makes it far easier to insert probabilistic capabilities where they add value. Starting from the probabilistic side makes it much harder to achieve the level of precision that business-critical use cases demand.

What This Means for Your Organization

If you are evaluating or deploying support automation—whether for customer service, internal help desks, HR workflows, or IT support—here are the questions every leader should be asking:

- Where in your workflows does accuracy need to be 100%? These are your exit points—the moments where a wrong answer creates real liability. Product pricing, policy terms, compliance information, scheduling, account data. Each of these is a candidate for a deterministic flow.

- Where can a probabilistic system add genuine value? Navigation, intent detection, FAQ handling, sentiment management, and nuanced follow-up questions are areas where the flexibility of generative AI is a feature, not a risk.

- Does your vendor’s architecture natively support this distinction? Or are they offering you a probabilistic system with a disclaimer? There’s a meaningful difference between a platform that can orchestrate between these two modes and one that simply adds guardrails to a language model.
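One way to start answering these questions is to write the inventory down as data. The Python sketch below is a hypothetical starting point, not a feature of any vendor's product: the topic names mirror the examples in the questions above, and the `Mode` enum and helper functions are assumptions made for illustration.

```python
from enum import Enum

class Mode(Enum):
    DETERMINISTIC = "deterministic"
    PROBABILISTIC = "probabilistic"

# Hypothetical inventory of conversation topics and how each must be handled.
ROUTING_POLICY = {
    "product_pricing": Mode.DETERMINISTIC,
    "policy_terms": Mode.DETERMINISTIC,
    "compliance_information": Mode.DETERMINISTIC,
    "scheduling": Mode.DETERMINISTIC,
    "account_data": Mode.DETERMINISTIC,
    "navigation": Mode.PROBABILISTIC,
    "faq_handling": Mode.PROBABILISTIC,
    "sentiment_management": Mode.PROBABILISTIC,
    "follow_up_questions": Mode.PROBABILISTIC,
}

def exit_points(policy: dict) -> list:
    """Topics where a wrong answer creates liability and exact answers are required."""
    return sorted(t for t, mode in policy.items() if mode is Mode.DETERMINISTIC)

def unclassified_topics(observed: list, policy: dict) -> list:
    """Topics seen in real conversations that nobody has assigned to a mode yet."""
    return sorted(set(observed) - set(policy))

if __name__ == "__main__":
    print(exit_points(ROUTING_POLICY))
    # A topic that shows up in transcripts but not in the policy needs a decision.
    print(unclassified_topics(["navigation", "fee_waivers"], ROUTING_POLICY))
```

Listing unclassified topics separately is a deliberate choice in this sketch: a topic nobody has reviewed should surface as an open decision rather than silently default to the generative path.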

The Bottom Line

The most important insight we can offer is this: the goal of enterprise AI is not to build a system that is almost always right. It’s to build a system you can trust. One that delivers ROI with the right answer at every step of the customer journey, and the audit and reporting to prove it.

Probabilistic systems are extraordinary tools. They have unlocked capabilities that were impossible just five years ago, and they will continue to reshape how organizations serve customers and employees. But replacing deterministic systems entirely is naïve. There are countless contexts where precision is non-negotiable.

The companies that get this right are those that build automation that transitions fluidly between probabilistic and deterministic systems. They use the best of both and understand when each is the right tool for the job. These companies have a competitive advantage in customer experience, operational efficiency, and risk management.

The ones that don’t will spend a lot of time explaining to customers why their AI was confidently, helpfully, impressively wrong.

Ready to build support automation you can actually trust? Learn how Capacity helps organizations design intelligent workflows that blend the best of probabilistic and deterministic AI.

About the Authors

Scott Litman is SVP at Capacity, where he works with organizations to design and implement AI-powered support automation strategies. He is focused on helping business leaders navigate the rapidly evolving landscape of enterprise AI with clarity and confidence.

Steve Frederickson is Head of Product for Answer Engine at Capacity, where he leads the development of Capacity’s core AI capabilities. He brings deep technical expertise in generative AI, workflow automation, and the architectural challenges of building enterprise-grade support systems.