How humans in the loop and data labelers strengthen data quality for machine learning and customer success.

In the simplest terms, data is just a collection of information. Both humans and computers gather data every day to make the judgments that guide their decisions. This could be as simple as waiting at an intersection for the light to turn green, or collecting a user’s phone number to pull up information about their account.

Unlike computers or machine learning models, humans are innately good at collecting information through several channels at once. This could mean picking up on nonverbal cues, like a head nod, or forming a response based on context. This is why there’s often a human behind the curtain teaching the model what information is relevant and what can be tossed out. Without a Human in the Loop (HITL), the data can get a little out of hand. With HITL, it’s sort of like a data daycare: still noisy, but less sticky.

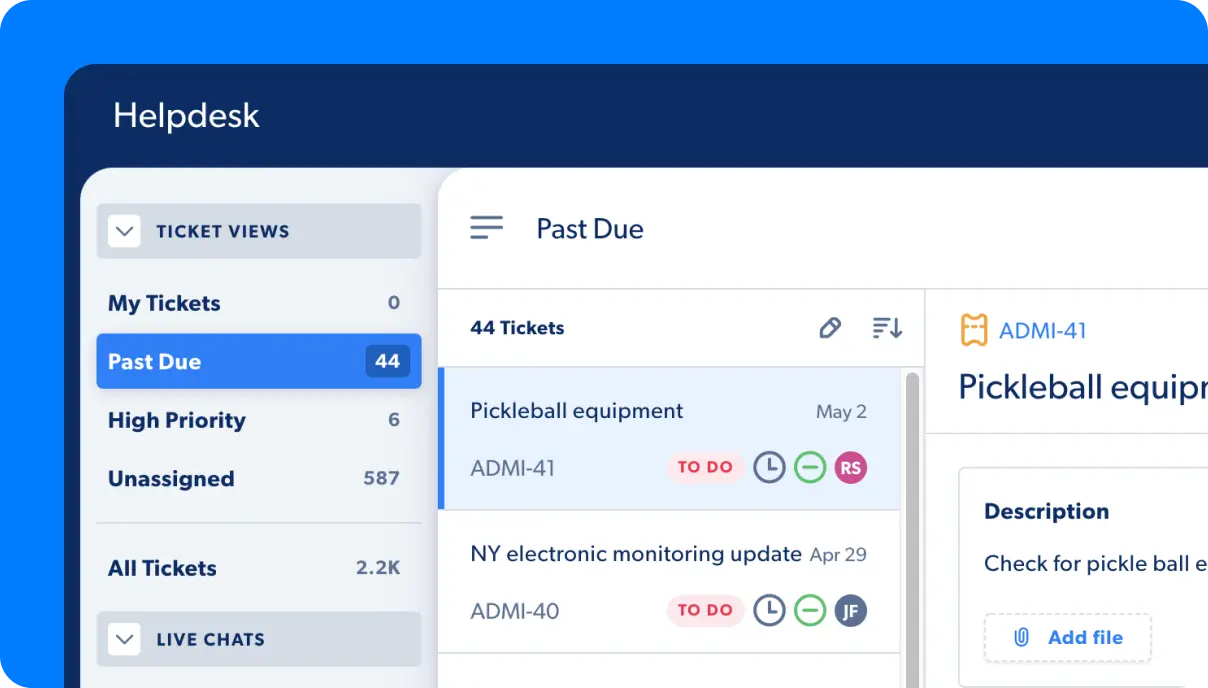

Once the data graduates from phase one of data daycare, the training wheels come off, and the information is mapped directly into the knowledge base and incorporated into the model. This is what allows bots, like Capacity, to direct users to appropriate responses based on what they’re requesting. A customer may need a quick answer about a company policy, a link to a document, or the status of a project.

Why we need human decision making.

The answers and responses themselves are important, but what’s even more valuable is how the conclusion was reached. Sometimes, even as humans, we can arrive at an answer but struggle to explain the logic that got us there. For example, we know that butterflies can fly, but we might find it hard to describe how they do it. How do we distinguish a butterfly from a moth? How do we know our mother’s voice from a stranger’s? Part of analyzing data and training a model is breaking decision making down into tiny steps.

Teaching a computer is similar to teaching a child, except children still learn math by counting on their fingers (and sometimes continue to as adults), and humans rely on computers to help them make calculations. Computers are at a disadvantage because they aren’t able to receive information simultaneously the same way a human would. Through most of our interactions, we’re able to directly pick up on information visually, audibly, and even subconsciously. This is why it takes time to break down our logic and teach the machine learning model a more literal way of mimicking the complex ways that humans process information.

One of the more difficult barriers that computers encounter in the decision-making process is context. How can we teach a model context when we sometimes struggle with the same issue ourselves? When a customer replies with “Oh great,” do they mean that’s good, or are they being sarcastic? Luckily, that’s where HITL and data labelers come in. The two groups work alongside one another to infer what the customer’s needs are based on logs, conversations, and other captured data.

The data labeling process.

Similar to how we learn as children by mirroring the behavior of others or copying the actions of our older siblings, machine learning models need to be shown how to behave. Data labelers and HITL are there not only to ensure that customers are getting the correct response, but also to prepare the data sets used for training and minimize errors. Because the same question can be asked multiple ways, it is the data labeler’s goal to make sure these questions result in the same response.

This can take the form of writing a list of questions that all communicate the same idea, with each one structured and worded differently. It can also be done by labeling pairs of questions: yes, these two questions mean the same thing, or no, these are unrelated. Even customers themselves are able to participate within the concierge by providing a similar form of feedback. Was this helpful? Thumbs up. Insert Kris Jenner holding camera, “You’re doing amazing sweetie.” Was this wrong? Alright, thumbs down.
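To make the yes/no pair labeling a little more concrete, here is a minimal Python sketch. It is not how Capacity stores its labels; the `PairLabel` record and the `group_paraphrases` helper are hypothetical names made up for illustration. The idea is simply that every "yes, these mean the same thing" label links two questions, and linked questions end up in one group that should share a response.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PairLabel:
    """One labeler judgment (hypothetical record): do two questions mean the same thing?"""
    question_a: str
    question_b: str
    same_meaning: bool


def group_paraphrases(labels):
    """Group questions that are linked by 'same meaning' labels, using union-find."""
    parent = {}

    def find(question):
        # Register unseen questions as their own group, then walk up to the root.
        parent.setdefault(question, question)
        while parent[question] != question:
            parent[question] = parent[parent[question]]  # shorten the path as we go
            question = parent[question]
        return question

    for label in labels:
        root_a, root_b = find(label.question_a), find(label.question_b)
        if label.same_meaning and root_a != root_b:
            parent[root_b] = root_a  # merge the two groups

    groups = {}
    for question in list(parent):
        groups.setdefault(find(question), set()).add(question)
    return list(groups.values())


labels = [
    PairLabel("How do I reset my password?", "I forgot my password", True),
    PairLabel("I forgot my password", "Can I change my login password?", True),
    PairLabel("How do I reset my password?", "What is the vacation policy?", False),
]
for group in group_paraphrases(labels):
    print(sorted(group))
```

In this example the three password questions land in one group, while the vacation policy question stays on its own because its only label was a "no."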

After looking through user feedback, we can further fine-tune and validate results by asking ourselves if the customer’s feedback looks correct, or if they responded in error. Just like humans, computers need positive reinforcement.
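One way to picture that double-check is a small script that surfaces feedback worth a second look. The sketch below is an assumption rather than a description of Capacity's tooling: the `outlier_votes` function, the `(response_id, user_id, vote)` tuple layout, and both thresholds are invented for illustration. It pulls out votes that go against a strong majority for the same response, so a human can decide whether the customer hit the wrong button or actually spotted a problem.

```python
from collections import Counter, defaultdict


def outlier_votes(feedback, min_votes=5, majority_share=0.8):
    """Return (response_id, user_id, vote) entries that go against a strong majority.

    feedback is an iterable of (response_id, user_id, vote) tuples,
    where vote is a string such as "up" or "down".
    """
    votes_by_response = defaultdict(list)
    for response_id, user_id, vote in feedback:
        votes_by_response[response_id].append((user_id, vote))

    outliers = []
    for response_id, votes in votes_by_response.items():
        if len(votes) < min_votes:
            continue  # too little feedback to call anything an outlier
        counts = Counter(vote for _, vote in votes)
        top_vote, top_count = counts.most_common(1)[0]
        if top_count / len(votes) >= majority_share:
            # Keep only the votes that disagree with the dominant answer.
            outliers.extend(
                (response_id, user_id, vote)
                for user_id, vote in votes
                if vote != top_vote
            )
    return outliers
```

The function only builds a review queue. Deciding whether a flagged vote was a mistake is still a job for a person.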

Sentiment analysis is a good example of how data labelers are able to piece together context to interpret a customer’s emotional response. Were we able to help an upset customer find what they needed, or is there something missing that could have improved their experience?

Aside from these tasks, other data labeling projects could include categorizing data into specific clusters (e.g., is this inquiry healthcare-related or would it fall under finance?). Other ways of annotating information could involve using tools to select objects within an image or extracting relevant information from a paragraph.

Crowdsource, outsource or in-house labeling?

Choosing the right method is important. You don’t want to send your data off to daycare in an overpriced Gap sweatshirt, only to have your child return sporting a shrunken turtleneck with a tag that has some other child’s initials Sharpied into it. How could this happen? Low quality, lack of accountability, or no clear vision as to how the annotated data will be applied. What’s the bigger picture?

Crowdsourcing is a popular way to categorize and label data. Mechanical Turk is a crowdsourcing platform owned by Amazon that allows virtually anyone to perform tasks that computers aren’t yet smart enough to comprehend. The platform has grown in popularity with both organizations and individuals because it is affordable and makes it easy to hand off short, relatively simple tasks and have them completed quickly by others. Workers log in and sift through jobs, called Human Intelligence Tasks (HITs), which could range anywhere from answering a survey about your preference for frozen pizza to identifying images of stop signs in a series of photos.

According to a 2017 study led by Kotaro Hara, a professor at Singapore Management University, Mechanical Turk workers earn approximately $2.00 an hour. As workers race to stack up earnings from each HIT, the quality of the results is often unreliable. There is also the chance of encountering bots that have been programmed to complete tasks quickly. Unfortunately, these bots pollute datasets and deliver poor results.

Data can also be outsourced to companies that specialize in labeling. These companies may have their own programs for labeling data, with employees who are familiar with what to look for. One possible downside is that they may not see the bigger picture of each client’s vision, or be able to offer unique insights in the form of suggestions or corrections.

Capacity employs its own in-house data labelers who are familiar with the clients we service, their individual needs, and how we can improve our data to increase efficiency, accuracy, and customer satisfaction.

Humans in the loop.

As we work to train the model to produce the best results, humans are there to fill in the gaps along the way. Questions that need to be answered are mapped to an appropriate response, or new exchanges are created and added to the knowledge base.

From there, we’re able to send a response directly back to the customer who requested it. As new questions are formed and exchanges are created, we’re able to capture that knowledge, label it, and train the model further, providing customers with the best results.