The White House’s New AI Executive Order Explained

Earlier this month, the White House released an Executive Order to govern the development and use of AI. The Executive Order summarizes the government’s view on AI and how it should be regulated and developed moving forward. In short, the order looks to encourage innovation and growth rather than over-regulate emerging technology, empowering the United States to remain a world leader in AI.

The order, released by the White House Office of Science and Technology Policy (OSTP), includes 10 guiding principles for companies to adhere to, all ultimately aimed at encouraging innovation and the development of new technology.

Here at Capacity, we’re experts in AI, so we’re glad to see these types of regulations being put in place. But we know not everyone will know how to read between the lines or see why the principles are important. Here are a few thoughts to help you better understand what each principle means. The throughline we noticed? Trust is key to the development of AI technology.

Public trust in AI.

The first point in the executive order is pretty simple: people only want to work with and use technology that they trust. It’s a critical element when developing new technology because everything you do hinges on building trust. Yet trust is incredibly hard to earn and very easily lost. That’s why public trust is essential in the early stages of a company: it will make or break your company’s future.

American leaders in artificial intelligence, particularly in the private sector, are keenly aware of how critical trust is to their success.

Public participation.

The public should be given the chance to provide feedback at all stages of the rule-making process. Giving the public ample opportunity to be involved matters because it creates a stronger sense of trust in a company.

Scientific integrity and information quality.

Any decision concerning AI research and development should be based on scientific information. This is a critical element because quality information is not only important for the decision-making process but also goes back to the very first point of building trust.

Risk assessment and management.

When developing new technology like AI, it’s important to be mindful of the risks associated, because most AI risks are manageable if planned for upfront.

Benefits and costs.

As with any technical project, AI or otherwise, the benefits and total cost should be considered from the beginning. Mapping out the benefits and costs of developing new technology will help your company immensely because it gives you a guideline to work from at the outset.

Flexibility.

Flexibility is essential to maintaining a system that scales; a rigid AI system won’t be successful in the long run. Technology changes rapidly, and a critical element of success is being able to roll with the punches, so to speak, and adapt to changing priorities.

Fairness and nondiscrimination.

One of the biggest criticisms of AI is that algorithms created by humans could be biased. When the training data set is biased, the resulting model perpetuates that existing bias. For this executive order, it’s critical that companies within the American AI initiative commit to removing as much bias as possible when developing new technology. At Capacity, we believe it is imperative that AI works off a data set that fully represents the organization, rather than a small subset.
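To make the idea of a representative data set a little more concrete, here is a minimal sketch in Python. It compares each group's share of a training set against its share of the organization and flags the ones that are noticeably over- or under-represented. The function name `representation_gaps`, the department labels, the shares, and the 5% tolerance are all hypothetical and chosen only for illustration; this is one simple check among many, not a complete fairness audit.

```python
from collections import Counter


def representation_gaps(training_groups, population_shares, tolerance=0.05):
    """Return groups whose share of the training data differs from their
    share of the organization by more than `tolerance`.

    Positive values mean over-represented, negative mean under-represented.
    """
    counts = Counter(training_groups)
    total = len(training_groups)
    gaps = {}
    for group, expected in population_shares.items():
        # Share of training records that belong to this group
        observed = counts.get(group, 0) / total if total else 0.0
        if abs(observed - expected) > tolerance:
            gaps[group] = round(observed - expected, 3)
    return gaps


# Hypothetical example: one department label per training record
training = ["sales"] * 70 + ["support"] * 28 + ["engineering"] * 2
# Hypothetical share of the organization made up by each department
org_shares = {"sales": 0.40, "support": 0.30, "engineering": 0.30}

print(representation_gaps(training, org_shares))
```

In this made-up example, sales is flagged as over-represented and engineering as under-represented, while support falls within the tolerance. A check like this only shows whether the data mirrors the organization; it does not by itself prove that a model trained on that data is free of bias.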

Disclosure and transparency.

Directly tied to building trust is the idea that companies and organizations should be transparent. They should be transparent about why decisions are being made, and no one within an organization should ever be completely in the dark.

Safety and security.

Security is at the forefront of all IT investments. Because many AI systems are built around artificial neural networks trained on large data sets, they often handle sensitive data that requires heightened privacy, such as biometrics (faces, fingerprints, etc.). Keep in mind that new risks require new solutions, and with great power comes great responsibility. As cyberattacks continue to grow in volume and complexity, AI is actually a resource for companies to manage security and safety better.

In time, economic and national security will be heavily reinforced by emerging AI technology. Federal agencies have already begun adopting these tools, and more will soon follow suit.

Interagency coordination.

Lastly, communication is crucial when developing new technology. If your employees and developers are not all on the same page, a misstep will eventually be made.

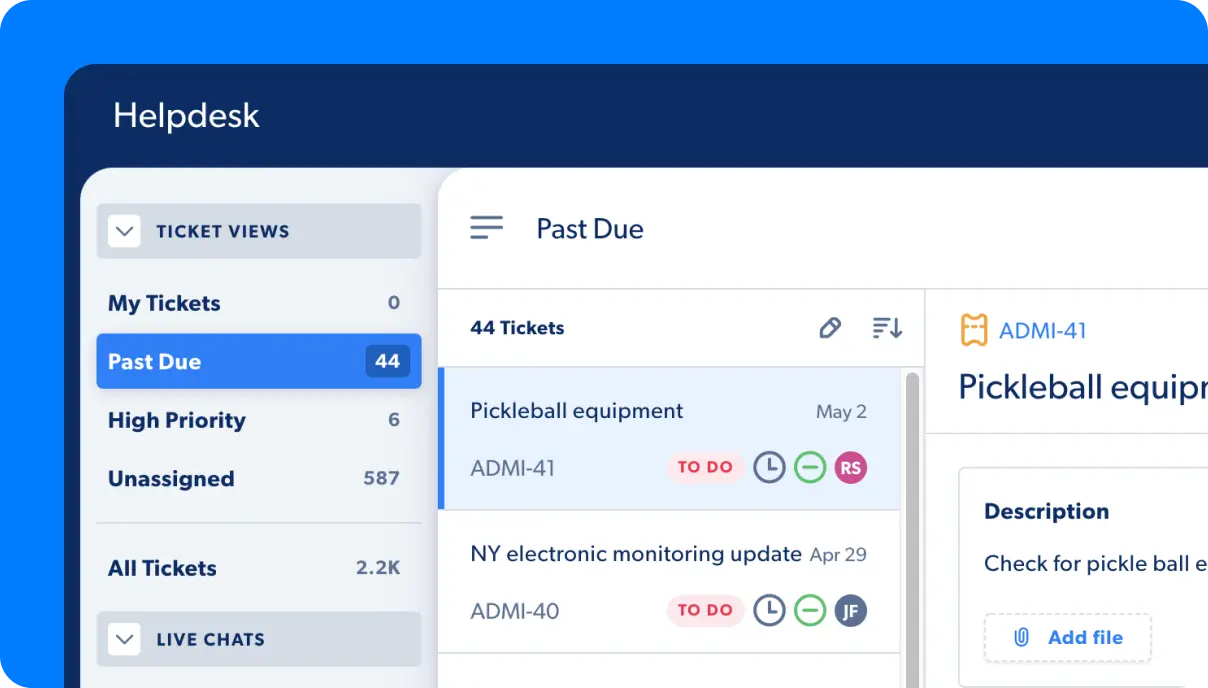

Whether you realize it or not, artificial intelligence (AI) has begun to impact every aspect of our lives, from our home lives to the way we work. Voice assistants and chatbots bring us the answers we need in near real time, and organizations are now beginning to leverage that same technology to bring the convenience of consumer experiences to the workplace.

We are constantly thinking of ways to leverage technology to solve existing pain points, and as a result, technology is developing faster than ever. This new executive order acts as a guidepost for continued American leadership in the field of AI development.