In a previous blog post, I described how advances in machine learning techniques are driving natural language processing forward at incredible speeds. Today, I’ll talk through the details of some of the most important of these innovations and how we think about applying them at Capacity in our conversational AI platform for the enterprise.

At Capacity, we use a flexible natural language chat interface as a gateway to the entire universe of company intelligence by:

- Integrating with the APIs of enterprise systems like Google Suite, MS Office, Salesforce, Workday, and more.

- Mining the structured and unstructured text information from documents like company websites, employee handbooks, reports, FAQs, and more.

- Capturing tribal knowledge that doesn’t currently exist in any electronic system because it’s floating around in people’s heads or on post-it notes stuck to their monitors: things like onboarding procedures, wifi logins, lunch favorites, keyboard shortcuts, the date of the company holiday party, and more.

(Before rebranding, Capacity did business as Jane.ai.)

To build a system that can reliably and accurately do all these things, we must master many specific NLP sub-tasks, such as information retrieval, named entity recognition, part-of-speech tagging, sentiment analysis, topic modeling, and question answering. The state of the art for most of these sub-tasks has come to involve machine learning algorithms, which are in turn combined and ensembled with business logic and/or an additional layer of machine learning algorithms.

Let’s dive into the first level of these NLP innovations: word vectors!

Word vectors.

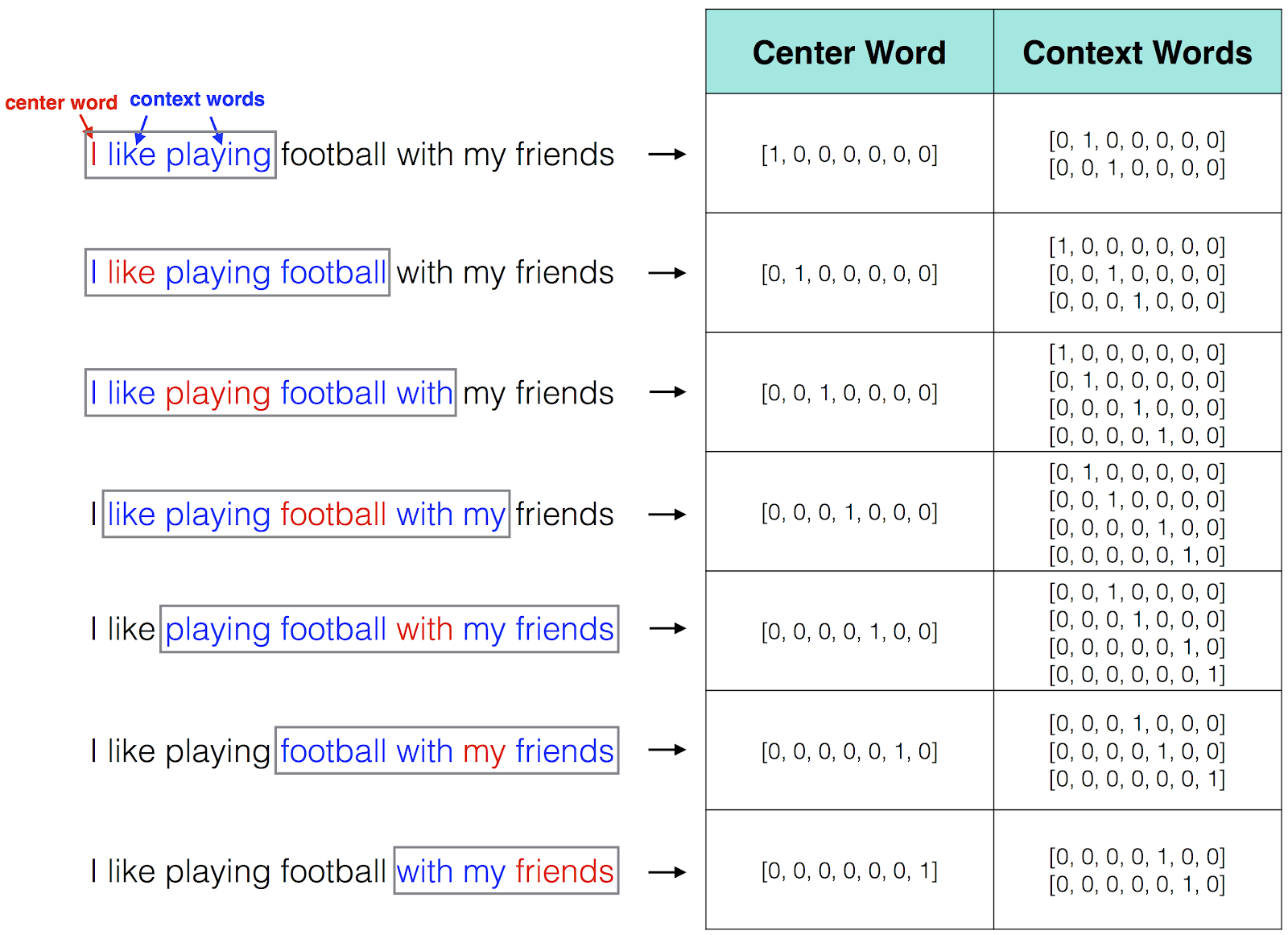

A word vector is a list of numbers that represents a word. Word vectors have been used in the past for relatively small bodies of text by doing simple frequency counts or co-occurrence calculations, but the development of the Word2Vec algorithm in 2013 was a major step forward. This machine-learning algorithm automatically reads through giant datasets of text and learns the pattern of associations and relationships between all the words inside that dataset. As illustrated in the figure below, a sliding window is used to scan through the corpus and create a 1-hot vector (a vector as long as the vocabulary in which only 1 value is non-zero) to represent the center, or target, word. Simultaneously, the algorithm creates vectors to represent each context word.

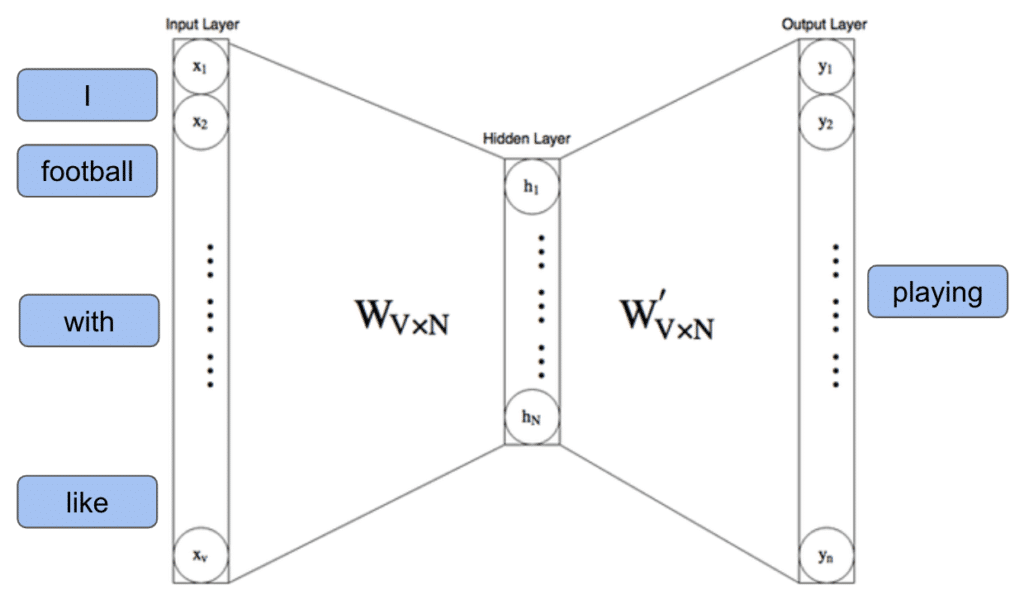
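
To make the sliding window concrete, here is a minimal sketch in plain Python. The toy sentence, the window size of 2, and the helper names `one_hot` and `training_pairs` are my own choices for illustration, not part of the original Word2Vec code.

```python
def one_hot(index, size):
    """Return a list of zeros with a single 1 at position `index`."""
    vec = [0] * size
    vec[index] = 1
    return vec


def training_pairs(tokens, window=2):
    """Slide a window over `tokens` and collect (target, context) word pairs."""
    pairs = []
    for i, target in enumerate(tokens):
        lo = max(0, i - window)
        hi = min(len(tokens), i + window + 1)
        for j in range(lo, hi):
            if j != i:  # the target word is not its own context
                pairs.append((target, tokens[j]))
    return pairs


corpus = "the quick brown fox jumps over the lazy dog".split()
vocab = sorted(set(corpus))  # 8 unique words
word_to_index = {word: i for i, word in enumerate(vocab)}

pairs = training_pairs(corpus, window=2)
target, context = pairs[0]
target_vec = one_hot(word_to_index[target], len(vocab))
context_vec = one_hot(word_to_index[context], len(vocab))
```

Each pair becomes one training example: the target vector goes in, and the context vector is what the network is asked to predict.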

The next step is to treat the target and context word vectors as the input and output of a neural network. The Word2Vec algorithm uses a simple, one-hidden-layer network like the one pictured below. This hidden layer compresses the information from the input and output datasets into a smaller dimension to create a compact “embedding” or “encoding” of the learned vocabulary. The simple example pictured above only has a vocabulary of 7 words, but a real example might take a larger dataset with a vocabulary of 10,000 unique words and force it to funnel all of that information through the calculations of a hidden layer that is only of length 300. In this way, a single target word out of 10,000 possible inputs affects the 300 values in the hidden layer, such that it then expands back out to make predictions of the context words in the symmetric 10,000-length output layer.

A neural network with a single hidden layer like those used in the Word2Vec training algorithm.
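
Here is a rough sketch of the forward pass through that funnel, using a 7-word vocabulary and a hidden layer of length 3 so it stays readable. The random weights, the names `w_in` and `w_out`, and the plain softmax output are assumptions of this sketch; practical Word2Vec implementations use cheaper approximations of the softmax.

```python
import math
import random

random.seed(0)
VOCAB_SIZE, HIDDEN_SIZE = 7, 3  # the real-world example in the text would be 10,000 and 300

# Input-to-hidden and hidden-to-output weights, randomly initialised before training.
w_in = [[random.uniform(-0.5, 0.5) for _ in range(HIDDEN_SIZE)] for _ in range(VOCAB_SIZE)]
w_out = [[random.uniform(-0.5, 0.5) for _ in range(VOCAB_SIZE)] for _ in range(HIDDEN_SIZE)]


def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def forward(target_index):
    """Predict, for every vocabulary word, the probability that it is a context word."""
    # Multiplying a 1-hot vector by w_in simply selects one row of w_in.
    hidden = w_in[target_index]
    scores = [
        sum(hidden[h] * w_out[h][v] for h in range(HIDDEN_SIZE))
        for v in range(VOCAB_SIZE)
    ]
    return hidden, softmax(scores)


hidden, probs = forward(target_index=2)
embedding = w_in[2]  # after training, this row is the word's vector
```

Note that the hidden layer for a word is just a row lookup in `w_in`, which is why the trained rows can later be lifted out and used on their own.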

Once you train the weights and parameters of a Word2Vec model, you can pull out the inner guts of the model—simply the 300-length vector associated with each word from the hidden layer—as a static representation of that word which can be used for other calculations and representations completely independently of the original model.

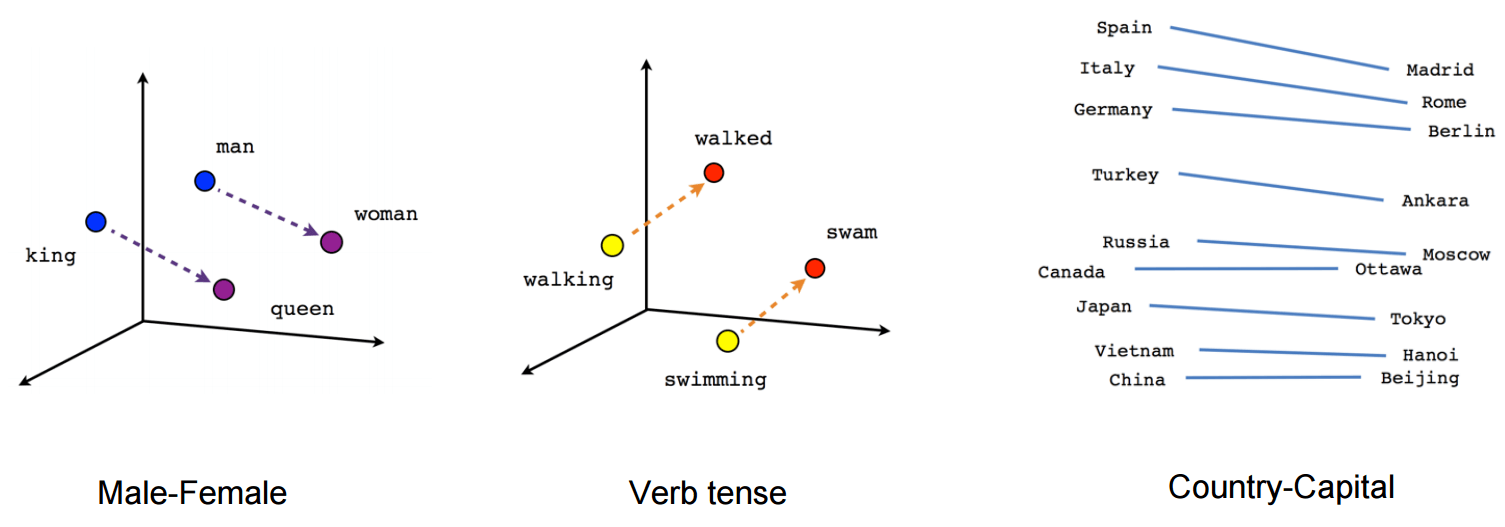

These word vectors (aka embeddings, aka encodings), trained on the mathematical and statistical relationships between words in huge, billion-plus-word corpora, have led to great advances in the ability to search, find semantic similarity, judge sentiment, and perform many other language tasks. They effectively package a large pre-trained model that others can build on and transfer-train from. Below is a classic visualization of some of the amazingly quantitative properties of word vectors.

The classic and obligatory visualization of some of the relationships encoded in word vectors.
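
The arithmetic behind that visualization can be sketched with a few hand-made vectors. The 3-dimensional values below are invented purely for illustration (real embeddings have hundreds of learned dimensions with no such tidy meanings), and `cosine` and `analogy` are hypothetical helper names.

```python
import math

# Hand-made vectors for illustration only: [royalty, gender, fruitiness].
vectors = {
    "king":  [1.0,  1.0, 0.0],
    "queen": [1.0, -1.0, 0.0],
    "man":   [0.0,  1.0, 0.0],
    "woman": [0.0, -1.0, 0.0],
    "apple": [0.0,  0.0, 1.0],
}


def cosine(a, b):
    """Cosine similarity: 1 means same direction, 0 means unrelated."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm


def analogy(a, b, c):
    """Return the word closest to vector(a) - vector(b) + vector(c)."""
    query = [x - y + z for x, y, z in zip(vectors[a], vectors[b], vectors[c])]
    candidates = [w for w in vectors if w not in (a, b, c)]
    return max(candidates, key=lambda w: cosine(query, vectors[w]))


result = analogy("king", "man", "woman")  # "queen" with these toy vectors
```

With trained vectors the match is never this exact, but the nearest neighbor of the combined vector often lands on the expected word.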

Unsupervised learning.

One of the most powerful things about this type of learning from text is that it is unsupervised. In contrast to many types of machine learning datasets, there is no labor-intensive step where the input and output data must be reviewed and labeled by a human. In my previous article, I mentioned how manually labeled examples of street signs, pedestrians, and other objects for autonomous vehicle algorithms have been crowdsourced using CAPTCHA tasks. For cleverly designed NLP models, the data is separated into input and output automatically. In the case of Word2Vec, it is simply by using a sliding window that recognizes word placement in any text…and there are untold terabytes of text readily available for models like this to train on. If there’s one thing civilization has been good at in the last few decades, it’s generating lots and lots of machine-readable text.

Beyond word embeddings.

With the foundation laid for effective, unsupervised machine learning of word-level patterns, there has been a natural progression of models for words, then models for phrases, then models for sentences, and more. Researchers are trying to throw a net over any and all relevant context features. Applying different neural net architectures has proven helpful, as various approaches have shown promise for various tasks.

For example, recurrent neural networks like GRUs or LSTMs have a built-in memory component that can learn to remember or forget certain information from earlier in a sequence, rather than simply relying on the word currently being read. This has proven very useful for NLP since language has many so-called “long-range dependencies.” The following two sentences have dramatically different meanings that could not be detected unless you can attend to the beginning of the sentence and the end of the sentence simultaneously:

“Elmo wants to sit on the bench next to Bert.”

“There is no way Elmo wants to sit on the bench next to Bert.”
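
To give a feel for the “remember or forget” machinery, here is a minimal sketch of one LSTM cell shrunk down to a single unit, so every gate is just a number. The parameter values are hand-picked for the demonstration (a real model learns them), and the function and dictionary names are my own.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def lstm_step(x, h_prev, c_prev, params):
    """One time step of a scalar (1-unit) LSTM cell.

    `params` maps each gate name to (input weight, recurrent weight, bias).
    """
    def gate(name, squash):
        w_x, w_h, b = params[name]
        return squash(w_x * x + w_h * h_prev + b)

    f = gate("forget", sigmoid)        # how much of the old memory to keep
    i = gate("input", sigmoid)         # how much new information to write
    o = gate("output", sigmoid)        # how much of the memory to expose
    g = gate("candidate", math.tanh)   # the new information itself

    c = f * c_prev + i * g             # updated memory cell
    h = o * math.tanh(c)               # updated hidden state
    return h, c


def run(sequence, params):
    """Feed a sequence through the cell and return the final memory value."""
    h, c = 0.0, 0.0
    for x in sequence:
        h, c = lstm_step(x, h, c, params)
    return c


# Hand-picked parameters: write to memory only when the input is 1.
base = {"input": (20.0, 0.0, -10.0), "output": (0.0, 0.0, 10.0), "candidate": (5.0, 0.0, 0.0)}
remember = dict(base, forget=(0.0, 0.0, 10.0))    # forget gate stays near 1
forget = dict(base, forget=(0.0, 0.0, -10.0))     # forget gate stays near 0

signal = [1.0, 0.0, 0.0, 0.0, 0.0]  # something important happens at the first step only
kept = run(signal, remember)        # memory of the first step survives to the end
lost = run(signal, forget)          # memory of the first step has faded away
```

The only difference between the two runs is the forget gate's bias, which is exactly the kind of setting the network tunes during training to decide what is worth carrying across a sentence.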

Another industry advancement that helps with this and considerably moves the needle forward for NLP is the Attention Mechanism. This is a way to dynamically “attend” to different portions of the input, and to the model’s own internal layers, based on the current, observed characteristics. For example, Attention will learn to weigh adjectives more heavily if a model is training on a task to predict positive or negative sentiment, while another model training to book travel might learn through Attention to weigh times and places more heavily.
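
As a rough illustration, here is attention for a single query in plain Python. I am assuming the scaled dot-product form popularized by the Transformer, since the post does not commit to a particular variant, and the 2-dimensional keys and the query are made up so that the first dimension stands in for “adjective-ness.”

```python
import math


def softmax(scores):
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attend(query, keys, values):
    """Scaled dot-product attention for one query vector."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    weights = softmax(scores)  # one weight per input position, summing to 1
    dim = len(values[0])
    output = [sum(w * v[d] for w, v in zip(weights, values)) for d in range(dim)]
    return weights, output


tokens = ["the", "movie", "was", "wonderful"]
# Hypothetical keys: first value = "adjective-ness", second = everything else.
keys = [[0.0, 1.0], [0.0, 1.0], [0.0, 1.0], [1.0, 0.0]]
values = keys
query = [4.0, 0.0]  # what a sentiment model might learn to ask for: adjectives

weights, output = attend(query, keys, values)
most_attended = tokens[weights.index(max(weights))]
```

In a trained model the queries, keys, and values are all produced by learned weight matrices, so what gets attended to is itself something the model learns.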

It’s all about finding weights, folks. Finding the weights for different connections and patterns that occur as you change perspectives on the data. This is how machine learning works: the input is ingested, a predicted output is produced, and that prediction is compared to the true or gold-standard output for that training example to measure its error. Gradient descent and backpropagation then allow you to automatically comb through the model and make tiny little tweaks to all the weights that will make that prediction a little more accurate the next time you iterate over it. The training cycle then proceeds to the next example.
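
Stripped down to a single weight, that training cycle looks something like the sketch below. The tiny dataset (the rule y = 2x), the squared-error loss, and the learning rate are all assumptions chosen to keep the example small.

```python
# (input, gold-standard output) pairs that follow the hidden rule y = 2x.
examples = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0)]

weight = 0.0          # the model starts out knowing nothing
learning_rate = 0.05

for epoch in range(200):
    for x, y_true in examples:
        y_pred = weight * x                  # ingest the input, produce a prediction
        error = y_pred - y_true              # compare it to the gold-standard output
        gradient = 2 * error * x             # slope of error**2 with respect to the weight
        weight -= learning_rate * gradient   # tiny tweak in the direction that reduces error
```

After enough passes the weight settles very close to 2. A real network does the same thing for millions of weights at once, with backpropagation supplying the gradient for each one.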

In essence, this is what human learning is all about, too! You need to pick up the problem and rotate it around. Zoom in and out. Look what came before and what comes after. Iterate. Wash, rinse, repeat.

ELMo model.

An exciting language model that uses bidirectional LSTMs to advance the state of the art is the ELMo model, or Embeddings from Language Models. (Notice the Sesame Street theme that seems to be surfacing?) Again, like Word2Vec, this model is pre-trained on a cleverly designed reading task and produces vector embeddings that are portable for building upon by anyone in their own separate models. Unlike Word2Vec’s static vectors, though, ELMo computes each word’s embedding from the sentence it appears in, so the same word can get different vectors in different contexts. One of the novel techniques applied here was to use multiple hidden layers, rather than just using one layer as is done in Word2Vec, and stack or otherwise combine them into the resulting embedding vectors. This enables the embedding to learn different levels of linguistic representation at different layers. For example, an early layer closer to the input layer might learn to focus on primitive information such as distinguishing nouns from verbs. A later layer closer to the output might learn to focus on higher-level information such as distinguishing “Janine” from “Jamie.” Again, what is magical about this is that the training data is all easily obtained and configured since it is done through the unsupervised reading of text.
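
Here is a small sketch of two ways to combine several hidden layers into one embedding for a token: plain concatenation, and a softmax-weighted sum with a scaling factor, which is my understanding of how the ELMo paper mixes its layers for a downstream task. The layer values and function names are invented for illustration.

```python
import math


def softmax(scores):
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def concatenate(layers):
    """Stack the layer vectors end to end into one longer vector."""
    return [value for layer in layers for value in layer]


def weighted_sum(layers, layer_scores, scale=1.0):
    """Mix the layers using softmax-normalized weights, then rescale."""
    weights = softmax(layer_scores)
    dim = len(layers[0])
    return [scale * sum(w * layer[d] for w, layer in zip(weights, layers)) for d in range(dim)]


# Hypothetical outputs of three layers for a single token (2 values each).
layers = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]

stacked = concatenate(layers)                     # length 6
mixed = weighted_sum(layers, [0.0, 0.0, 0.0])     # equal weights: a plain average
early = weighted_sum(layers, [10.0, 0.0, 0.0])    # leans almost entirely on the first layer
```

With the weighted sum, a downstream task can learn its own layer scores, leaning on early layers for syntax-heavy tasks and on later layers for meaning-heavy ones.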

The next powerful development in NLP deep learning was the so-called Transformer. This architecture uses multiple stacked layers of attention mechanisms so that it can more dynamically attend to multiple items within the input data, while also tracking the spatial or time order of multiple inputs. This structure allows it to reproduce the same sequence-to-sequence performance of recurrent neural networks…without the recurrence. Said another way, it can look at a whole sentence at once, rather than look at one word at a time like LSTMs or GRUs, which zip through a sentence from end to end like a ribosome reading a snippet of RNA (for any biology fans out there). This makes the Transformer much faster to train and run for predictions while providing the same accuracy as powerful RNN models.
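
Because a Transformer sees every word at once, word order has to be injected explicitly. One way to do that, sketched below, is the sinusoidal positional encoding from the original Transformer paper; the sequence length and dimension here are arbitrary small values, and the function name is my own.

```python
import math


def positional_encoding(num_positions, dim):
    """Build one vector per position using sines and cosines of different wavelengths."""
    table = []
    for pos in range(num_positions):
        row = []
        for i in range(dim):
            # Each pair of dimensions (2k, 2k + 1) shares one wavelength.
            angle = pos / (10000 ** (2 * (i // 2) / dim))
            row.append(math.sin(angle) if i % 2 == 0 else math.cos(angle))
        table.append(row)
    return table


pe = positional_encoding(num_positions=4, dim=8)

# Hypothetical word embeddings for a 4-word sentence (all zeros, just to show the addition).
embeddings = [[0.0] * 8 for _ in range(4)]
inputs = [[e + p for e, p in zip(emb, pos)] for emb, pos in zip(embeddings, pe)]
```

Every position gets a distinct pattern, so after the addition the attention layers can tell the first word from the fourth even though they process them simultaneously.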

BERT model.

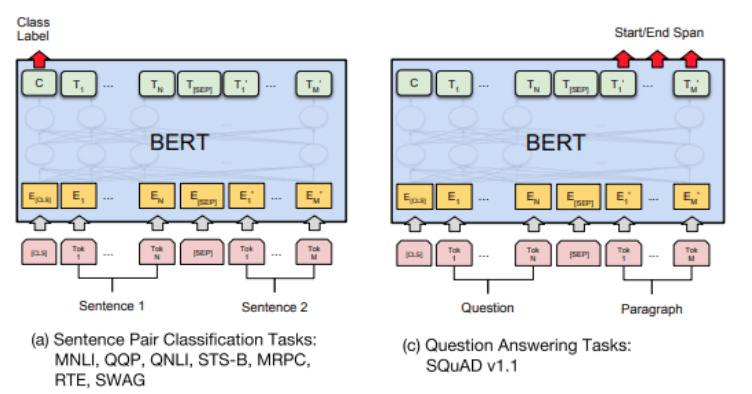

The newest model from the folks at Google AI Language takes the use of Transformers to the next level. This new model, BERT (Bidirectional Encoder Representations from Transformers), is being called by some the best NLP model ever. It uses Transformers to train on multiple input sentences at once, so it has access to a wider context than many previous models. The training process also uses a novel “masking” task, meaning that certain words in the input sentences are removed and held aside as truth data labels so the model can learn to predict them. This masking task helps to generate a superb pre-trained, general-purpose NLP model, which the paper’s authors proceed to fine-tune and transfer-train for specific tasks. The output layer can be fine-tuned in several flexible ways. See below left how they use a special class label token (labeled “C”) for classification tasks, or below right where they train the output layer to predict word-spans for question answering tasks.

Architecture diagram and different fine-tuning techniques for BERT model.
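
The masking task is easy to sketch: hide some tokens and keep the originals aside as labels. The version below is deliberately simplified. It always substitutes a `[MASK]` token at the paper's 15% rate and leaves out the paper's extra step of occasionally using a random word or the original word instead. The function name and the fixed seed are my own.

```python
import random


def mask_tokens(tokens, mask_rate=0.15, seed=0):
    """Hide a random subset of tokens and return (masked tokens, {position: original})."""
    rng = random.Random(seed)  # fixed seed so the example is repeatable
    masked, labels = [], {}
    for position, token in enumerate(tokens):
        if rng.random() < mask_rate:
            masked.append("[MASK]")
            labels[position] = token  # held aside as the truth label
        else:
            masked.append(token)
    return masked, labels


sentence = "there is no way elmo wants to sit on the bench next to bert".split()
masked, labels = mask_tokens(sentence)
```

As with Word2Vec, no human labeling is needed: the text supplies both the question (the masked sentence) and the answer (the hidden words).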

On the GLUE NLP benchmark leaderboard, these fine-tuned models far exceed the previous state of the art for most of the task-specific benchmarks in the industry. Most impressively, BERT also exceeds human-level performance on the Stanford Question Answering Dataset (SQuAD v1.1).

Now that we have the pre-trained models and code for BERT from the published GitHub repo, we have found it relatively easy to transfer-train it with our enterprise and industry-specific tasks and datasets, and plug it into our ensemble of open source and in-house models that power the Capacity platform’s natural language understanding.

These are exciting times to be developing NLP systems, so stay tuned as we post new updates!